NLP Tutorial

Before diving into the details of Natural Language Processing (hereafter referred to as NLP), let me take this chance to set out the context for NLP.

Let us go through some daily experiences that we might have noticed only as features of an application, without recognizing them as NLP applications.

- Did you notice that Facebook has shown you an advertisement for something related to your recent status updates?

- Did you notice Google classifying your emails into Primary, Social, Updates, Spam, etc.?

- Did you notice that the keyboard on your smartphone has learnt the patterns and words of your text input?

- Did you notice Google giving you results and suggested queries when you made a search?

- Did you notice that a lot of the things around you, at least those related to technology, are adjusting themselves to suit your thoughts and expressions?

Well, NLP is behind all of these. Not entirely, but wherever some of the data is in the form of text.

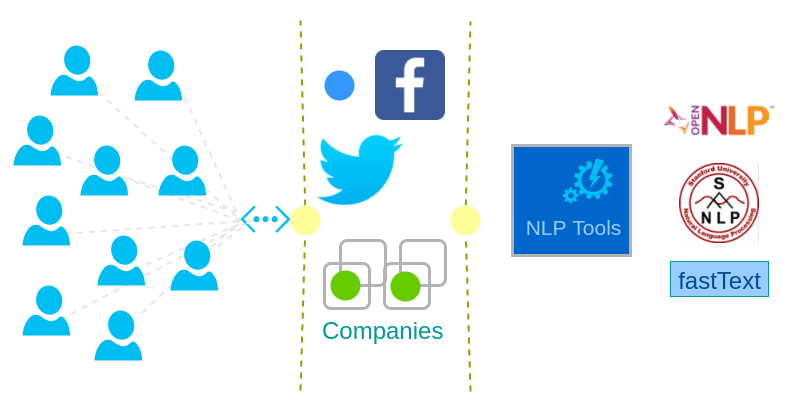

All major companies are trying to understand their customers’ buying patterns, their spending behavior, and everything else that is needed to promote their goods to the customer and make a good sale. With so many customers active on social media, companies have found a way to learn more about their customers with the help of Sentiment Analysis. NLP is being used not only for text analytics, but also to replace humans with bots for interactive engagement with customers. Chatbots have evolved to understand the language, context and meaning of text (or speech that is converted to text) and to interact with the user to provide the necessary information.

Traditional word processors treated text as nothing more than a sequence of symbols. They never tried to learn the principal elements of a language, the building blocks from which the language is composed. Back then, incorporating such features into word processors demanded a lot of computational power and task-specific algorithms. The rise of machine learning, together with relatively massive computational power at low cost, has led to many libraries and tools that aim to make Natural Language Processing easier.

The introduction of machine learning algorithms such as the Maximum Entropy model and Naive Bayes helped a lot in making it practical to train a model on a data corpus with competitive training time and accuracy.

To process natural languages like English, Spanish, Hindi, Chinese, Russian, etc., NLP had to be broken down into much smaller tasks that could be used across most languages. Some of these common tasks are:

- Parsing

- Chunking

- Tokenization

- Part-of-Speech Tagging

- Lemmatization

- Sentence Detection

- Named Entity Recognition

With the help of the common tasks above, more complex NLP tasks like Document Classification, Language Detection, Sentiment Analysis, Document Summarization, etc. can be achieved.
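To make two of these building blocks concrete, here is a minimal sketch of Sentence Detection and Tokenization using only regular expressions. The function names `detect_sentences` and `tokenize` are made up for this illustration. Real libraries use trained models and cope with cases such as abbreviations ("Dr.", "e.g."), which this naive version would split incorrectly.

```python
import re

def detect_sentences(text):
    """Naively split text into sentences at '.', '!' or '?' followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [part for part in parts if part]

def tokenize(sentence):
    """Split a sentence into word tokens and single punctuation tokens."""
    return re.findall(r"\w+|[^\w\s]", sentence)

text = "NLP is fun. It powers search engines! Does it power spam filters?"
sentences = detect_sentences(text)
tokens = [tokenize(sentence) for sentence in sentences]

for sentence, sentence_tokens in zip(sentences, tokens):
    print(sentence, "->", sentence_tokens)
```

The output of one task feeds the next: sentences are detected first, and each sentence is then tokenized. Tasks such as Part-of-Speech Tagging or Named Entity Recognition typically work on these tokens.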

Let's go into the basic details of some of the Text Analytics and Artificial Intelligence applications where Natural Language Processing is used.

Language Detection

When you expect text input from users spread across a wide geographical area, your first task, before you can derive any information, is to detect or guess the language the text is written in. Facebook posts, tweets, or feedback to a company’s customer support could be in different languages. Facebook supports over 100 languages and Twitter supports around 56 languages. A post or tweet hitting their servers has to be identified as belonging to one of the many languages they deal with.
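One simple way to guess a language is to count how many very common words ("stopwords") of each candidate language appear in the text. The sketch below does this for three languages. The stopword lists are tiny and hand-picked for this illustration, and `guess_language` is a made-up name. Production systems usually rely on character n-gram statistics or trained models, which hold up far better on short texts.

```python
import re

# Tiny, hand-picked stopword lists: an assumption for illustration only.
STOPWORDS = {
    "english": {"the", "and", "is", "of", "to", "in", "it", "that"},
    "spanish": {"el", "la", "y", "es", "de", "que", "en", "los"},
    "french": {"le", "la", "et", "est", "de", "que", "les", "un"},
}

def guess_language(text):
    """Return the language whose stopwords occur most often, or 'unknown'."""
    words = re.findall(r"\w+", text.lower())
    scores = {
        language: sum(word in stopwords for word in words)
        for language, stopwords in STOPWORDS.items()
    }
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else "unknown"

print(guess_language("The cat is in the garden and it is happy"))
print(guess_language("El gato es de la casa y que los niños"))
```

Note that related languages share stopwords ("de", "la" and "que" appear in both the Spanish and French lists), so short inputs can easily be misclassified with this approach.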

Sentiment Analysis

The Internet has become a common place where users share their experiences with other people, their thoughts about personalities and public figures, their experiences with online purchases, their travel plans and much more. These personalities and public figures have, in turn, become curious about their reputation. Online retailers want to know how happy their customers are with the service. Sentiment Analysis is also used for polarity detection, where NLP determines whether the opinion expressed about a given subject is positive, negative or neutral.
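Polarity detection can be sketched with a small word lexicon: count the positive and negative words in a text and compare the totals. The word lists and the `polarity` function below are assumptions made for this illustration. A real system would use a much larger lexicon or a trained model, and would need to handle negation ("not good") and sarcasm, which this sketch ignores.

```python
import re

# Small illustrative lexicons: assumptions, not taken from any real resource.
POSITIVE = {"good", "great", "happy", "love", "excellent", "fast"}
NEGATIVE = {"bad", "poor", "slow", "hate", "terrible", "broken"}

def polarity(text):
    """Classify text as 'positive', 'negative' or 'neutral' by counting lexicon words."""
    words = re.findall(r"[a-z']+", text.lower())
    score = sum(word in POSITIVE for word in words) - sum(word in NEGATIVE for word in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"

for review in [
    "Great product, fast delivery, I love it",
    "Terrible service and slow, broken app",
    "The parcel arrived on Tuesday",
]:
    print(review, "->", polarity(review))
```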

Text or Document Classification

Categorizing or classifying a document (containing text) into one of multiple predefined categories is called Document Classification.
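As an illustration, the sketch below trains a small Naive Bayes classifier (one of the algorithms mentioned earlier) on four toy documents and uses it to categorize new text. The class name, the training sentences and the two categories are invented for this example. It uses word counts with add-one (Laplace) smoothing, which is one common formulation and not necessarily what any particular library implements.

```python
import math
import re
from collections import Counter, defaultdict

def tokenize(text):
    """Lowercase the text and return its alphabetic words."""
    return re.findall(r"[a-z]+", text.lower())

class NaiveBayesClassifier:
    def __init__(self):
        self.word_counts = defaultdict(Counter)  # category -> word frequencies
        self.doc_counts = Counter()              # category -> number of documents
        self.vocabulary = set()

    def train(self, documents):
        """documents is an iterable of (text, category) pairs."""
        for text, category in documents:
            self.doc_counts[category] += 1
            for word in tokenize(text):
                self.word_counts[category][word] += 1
                self.vocabulary.add(word)

    def classify(self, text):
        """Return the category with the highest log-probability for the text."""
        total_docs = sum(self.doc_counts.values())
        vocab_size = len(self.vocabulary)
        words = tokenize(text)
        best_category, best_score = None, -math.inf
        for category, n_docs in self.doc_counts.items():
            # Start from the log prior of the category.
            score = math.log(n_docs / total_docs)
            total_words = sum(self.word_counts[category].values())
            for word in words:
                # Add-one smoothing keeps unseen words from zeroing the probability.
                count = self.word_counts[category][word]
                score += math.log((count + 1) / (total_words + vocab_size))
            if score > best_score:
                best_category, best_score = category, score
        return best_category

training_data = [
    ("the team won the football match", "sports"),
    ("a late goal decided the match", "sports"),
    ("the new phone has a fast processor", "technology"),
    ("software update improves the phone battery", "technology"),
]
classifier = NaiveBayesClassifier()
classifier.train(training_data)

print(classifier.classify("the football team scored a goal"))
print(classifier.classify("my phone needs a software update"))
```

Working with log-probabilities instead of multiplying raw probabilities avoids numerical underflow on longer documents.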

Tools or Libraries that implement Natural Language Processing tasks

Educational institutions like Stanford, open-source communities like the Apache Software Foundation, companies like Facebook, and many others have created libraries and tools to handle Natural Language Processing tasks.

Apache OpenNLP – by Apache Software Foundation

Apache OpenNLP is a free, open-source library that provides implementations of the following tasks:

- Sentence Detector

- Tokenizer

- Name Finder

- Document Categorizer

- Part-of-Speech Tagger

- Lemmatizer

- Chunker

- Parser

Stanford CoreNLP – by Stanford

Stanford CoreNLP provides implementations of the following tasks:

- Tokenization

- Sentence Splitting

- Part of Speech Tagging

- Lemmatization

- Named Entity Recognition

- Constituency Parsing

- Dependency Parsing

- Co-reference Resolution

- Natural Logic Polarity

- Open Information Extraction

fastText – by Facebook

fastText is a free, open-source library that implements the following NLP tasks with short training times and good performance:

- Word representation learning

- Text Classification

You may refer to the instructions on how to build fastText from source.